Lead Time to Value

Measuring the Full Pipeline in the Agentic Era

A guest talk from the spring cohort of Elite AI-assisted Coding.

In a recent session of Elite AI-assisted Coding, Eleanor hosted Greg Ceccarelli and Jake Levirne, co-founders of SpecStory and Stoa. The discussion centered on a critical challenge emerging in the age of AI-assisted development: as coding agents drastically compress the time required to write and deploy software, the primary bottleneck in the software development life cycle (SDLC) has shifted upstream.

The talk, supported by their presentation slides, focused on identifying, measuring, and optimizing what happens before the first line of code is written.

The Shifting Bottleneck in Software Delivery

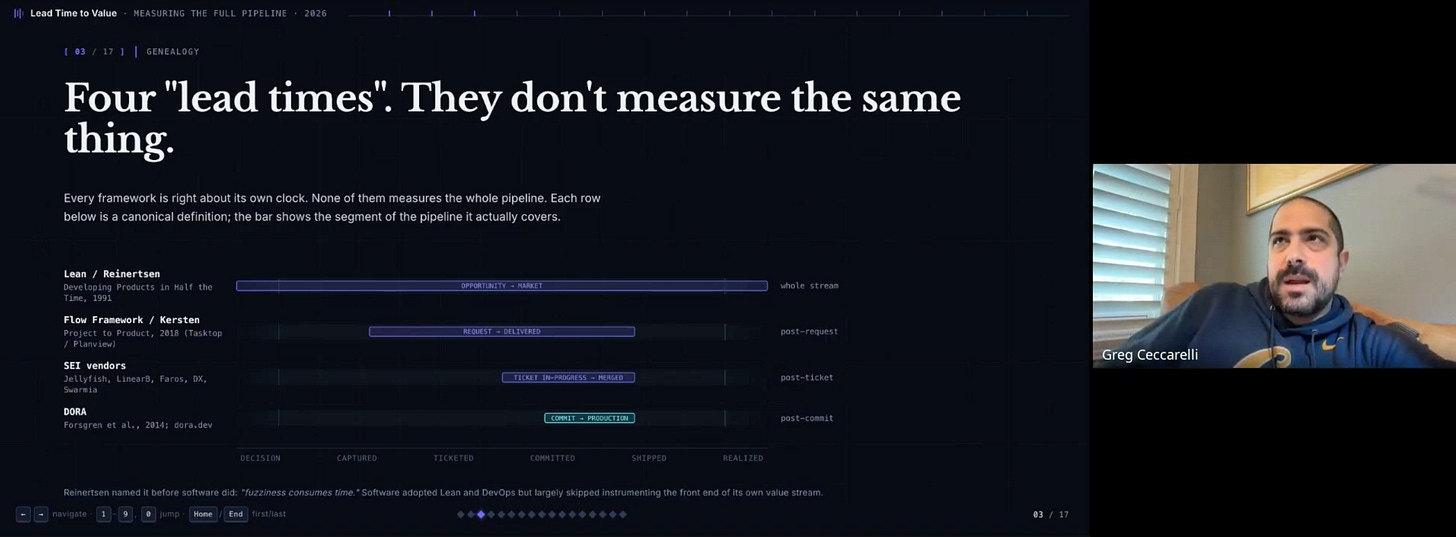

For the past decade, the software engineering industry has obsessed over optimizing the implementation and deployment phases. Frameworks like DORA (DevOps Research and Assessment) established metrics such as lead time for changes, deployment frequency, mean time to recovery, and change failure rate. However, as Greg pointed out, these metrics have a significant blind spot: the clock generally starts at the first code commit, so everything that happens before it goes unmeasured.

“I think what’s interesting is everyone has started to play with and incorporate agentic development into their workflows that the things in the past that you might have put a lot of emphasis on like the time from something being first committed to a repository to the time that it is released to production for your customers to maybe experience have been changing.”

With the advent of coding agents and mature CI/CD pipelines, the time to implement features has collapsed from days or weeks to mere hours, and deployment times have shrunk to minutes. Consequently, the new dominant latency in the SDLC lies in the “fuzzy front end” — the period from when an opportunity or idea is identified to when a project is actually committed to and defined.

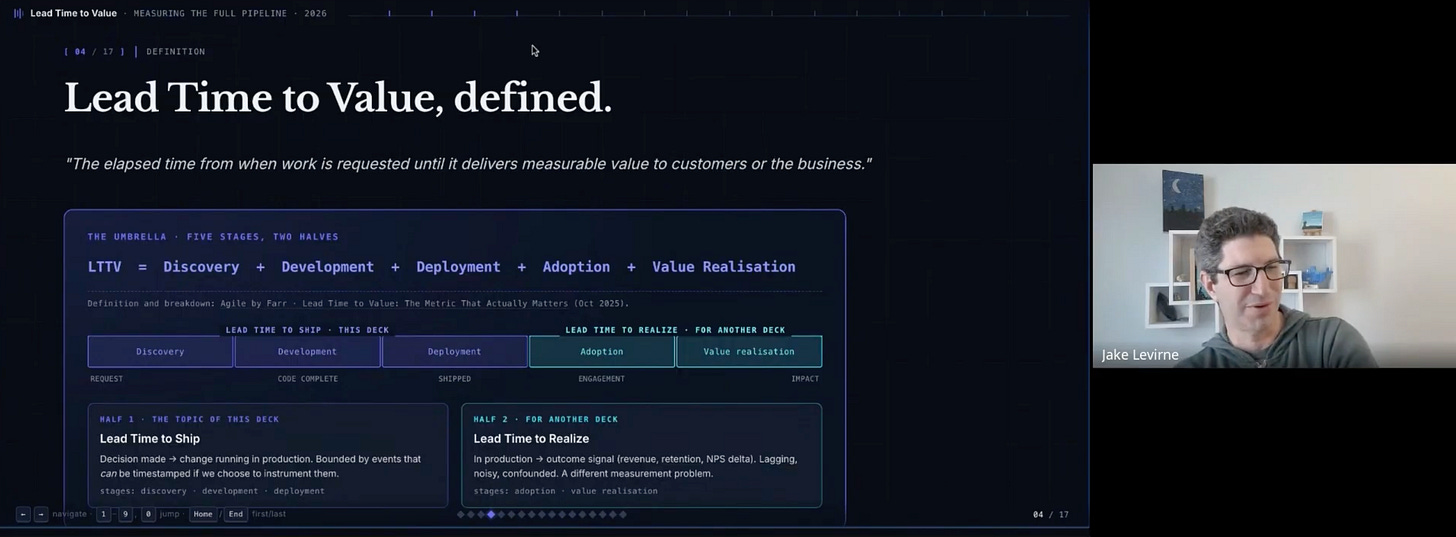

Greg introduced the concept of Lead Time to Ship, which encompasses discovery, development, and deployment. The goal is to understand how fast a decision made on a Monday translates into tangible value that a customer can experience.

Unpacking Intent Lead Time

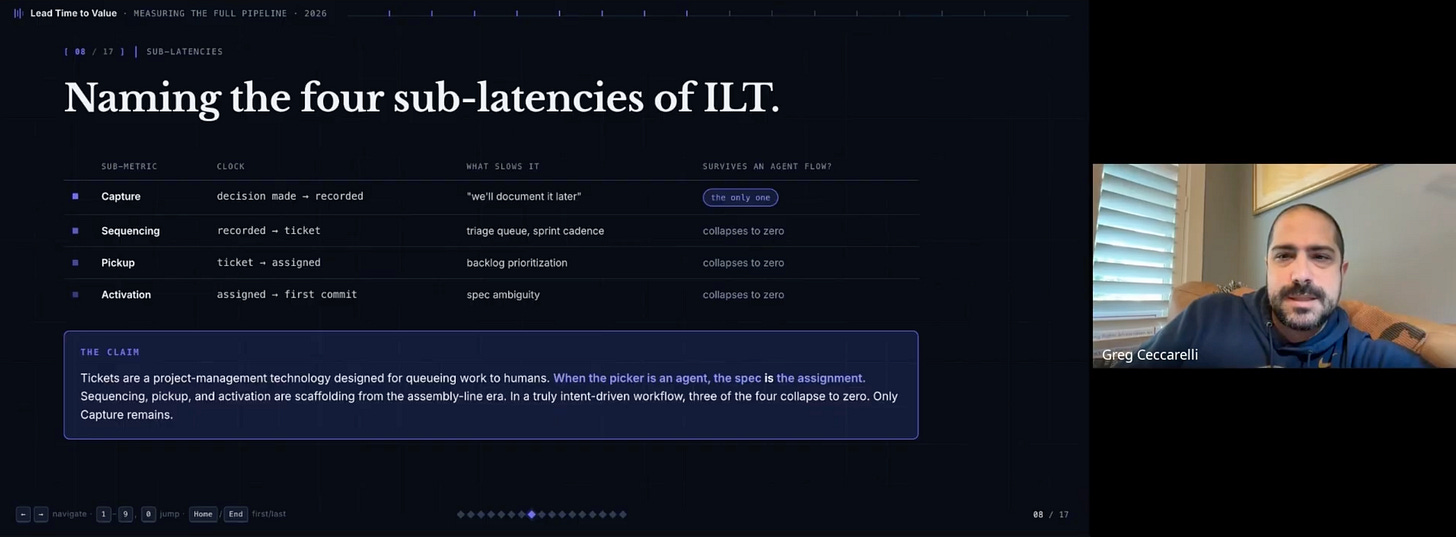

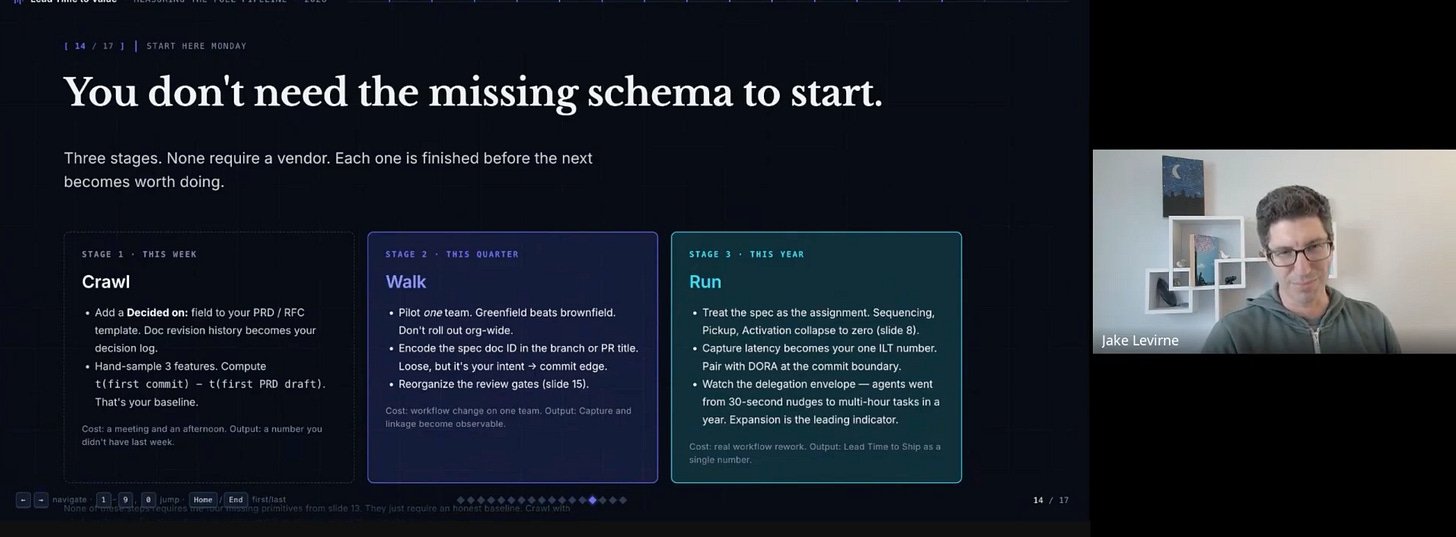

To address the upstream bottleneck, the presentation defined a new metric: Intent Lead Time. This represents the pre-commit portion of the Lead Time to Ship, calculated as the time from when a decision is made to when the first commit lands, including the time it takes to capture that decision.

Greg broke down Intent Lead Time into four sub-latencies:

Capture: The time from a decision being made to it being formally recorded.

Sequencing: The time from a recorded decision to a ticket being created and prioritized.

Pickup: The time from a ticket existing to it being assigned to an engineer.

Activation: The time from assignment to the first actual commit.
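The four sub-latencies above can be sketched as simple timestamp arithmetic. This is a minimal illustration rather than anything shown in the talk: the `WorkItemTimeline` class, its field names, and the example dates are all hypothetical, and it assumes the clock starts when the decision is made, so the four pieces sum to the whole pre-commit period.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class WorkItemTimeline:
    """Timestamps for one piece of work. All field names are hypothetical."""
    decided_at: datetime       # the decision is made (for example, on a call)
    recorded_at: datetime      # the decision is formally recorded
    ticketed_at: datetime      # a ticket is created and prioritized
    assigned_at: datetime      # the ticket is assigned to an engineer
    first_commit_at: datetime  # the first commit lands
    released_at: datetime      # the change reaches production


def intent_sub_latencies(t: WorkItemTimeline) -> dict[str, timedelta]:
    """Split the pre-commit period into the four sub-latencies."""
    return {
        "capture": t.recorded_at - t.decided_at,
        "sequencing": t.ticketed_at - t.recorded_at,
        "pickup": t.assigned_at - t.ticketed_at,
        "activation": t.first_commit_at - t.assigned_at,
    }


def intent_lead_time(t: WorkItemTimeline) -> timedelta:
    """Total pre-commit time (assumes the clock starts at the decision)."""
    return sum(intent_sub_latencies(t).values(), timedelta())


def commit_to_release(t: WorkItemTimeline) -> timedelta:
    """The post-commit portion that commit-based metrics already cover."""
    return t.released_at - t.first_commit_at


# Invented example: a decision made on a Monday morning.
example = WorkItemTimeline(
    decided_at=datetime(2025, 3, 3, 10, 0),
    recorded_at=datetime(2025, 3, 4, 10, 0),
    ticketed_at=datetime(2025, 3, 7, 10, 0),
    assigned_at=datetime(2025, 3, 12, 10, 0),
    first_commit_at=datetime(2025, 3, 14, 10, 0),
    released_at=datetime(2025, 3, 14, 16, 0),
)

for name, latency in intent_sub_latencies(example).items():
    print(f"{name:>10}: {latency}")
print(f"intent lead time:  {intent_lead_time(example)}")
print(f"commit to release: {commit_to_release(example)}")
```

With these invented numbers, the pre-commit period adds up to 11 days while commit to release takes 6 hours, which is the kind of imbalance the talk describes.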

In traditional workflows, these steps can take weeks. AI agents, however, change how many of the steps are needed at all.

“In our minds though, over time, and I don’t know if people on this call have experienced this, you know, when we’re building product internally at SpecStory, it’s almost like these three steps collapse. Like sequencing, pickup, and activation almost collapse.”

When a team can move directly from a transcribed call to an agent spinning up a prototype, the traditional ticketing and assignment phases shrink to almost nothing. The remaining friction point is the initial capture: transforming an ambiguous conversation into a structured specification that an agent can act upon.

The Challenge of Specification Validation

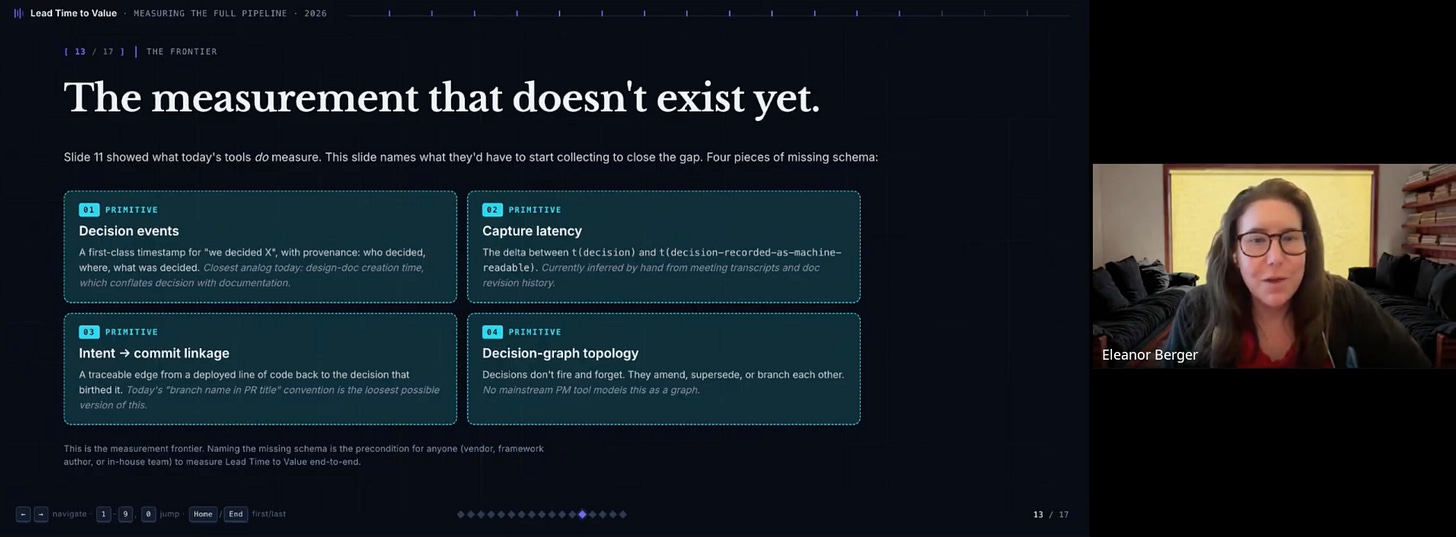

During the session, Eleanor raised a pivotal question regarding the verification of the intent phase. If code is increasingly just a derivative of the specification, measuring code commits becomes less interesting. The real challenge is determining when a specification is actually ready and trustworthy.

“I have no idea how to verify unambiguously that a specification is ready. That’s the thing I want to measure, right? Like when did they get to a specification that I can actually trust?”

Jake addressed this by highlighting the inherent non-determinism of product development.

“If you frame it up as like, you know, how do I know my specification is correct and complete? You don’t. Right. I think what’s happening is that this is exposing more people in the industry to the realization of the non-determinism of getting your spec right because it’s always been a problem. It’s just that we’ve found other ways to work around it.”

The solution is not to seek a perfect specification upfront, but to use agents to shorten the cycle time between market feedback and product iteration. Greg emphasized that agents allow teams to generate multiple high-fidelity prototypes rapidly and validate them with customers directly on calls, rather than relying on abstract discussions or low-fidelity wireframes.

When a student asked about the risk of customers mistaking high-fidelity prototypes for production-ready software, Jake clarified their team’s philosophy: they do not separate prototypes from production. Everything they build is treated as delivered software that is expected to keep improving.

Leveraging Synthetic Data for Warm Starts

Aaron inquired about the potential of using synthetic data generation as a “warm indicator” to help build out specifications before they meet real users.

Jake provided a compelling example from a healthcare product consultant who uses large language models (LLMs) to generate synthetic users based on target personas.

“He uses those synthetic users to test his spec, not to test his software. And so like he will create a set of these users that match his given personas and then he’ll start clean like clean sessions, clean context and he’ll just feed in the synthetic user and his spec and look for feedback.”

While acknowledging the challenges of ensuring synthetic personas are sufficiently distinct and salient, Jake noted that this approach provides a valuable “warm start,” giving the feedback loop a higher starting point long before live customer testing occurs.
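The workflow Jake described (one clean session per synthetic user, each given the persona and the spec) could be sketched roughly as follows. This is an assumption-heavy illustration, not the consultant's actual setup: `review_spec_with_personas`, the `AskModel` signature, the prompt wording, and the personas are all hypothetical, and `fake_model` is a stand-in so the sketch runs without any LLM client.

```python
from typing import Callable

# "Messages in, text out". This signature is an assumption for the sketch,
# not a real library API; wrap whichever LLM client you use to match it.
AskModel = Callable[[list[dict[str, str]]], str]


def review_spec_with_personas(
    spec: str,
    personas: dict[str, str],
    ask_model: AskModel,
) -> dict[str, str]:
    """Collect spec feedback from each persona in its own clean session."""
    feedback: dict[str, str] = {}
    for name, description in personas.items():
        # A fresh message list per persona: no shared history, so one
        # persona's reaction cannot leak into another's.
        messages = [
            {
                "role": "system",
                "content": f"You are this user: {description} Stay in character.",
            },
            {
                "role": "user",
                "content": "Read this product spec and say what is unclear, "
                           "missing, or wrong for you:\n\n" + spec,
            },
        ]
        feedback[name] = ask_model(messages)
    return feedback


def fake_model(messages: list[dict[str, str]]) -> str:
    """Offline stand-in for a real model call (hypothetical)."""
    return f"(reply based on {len(messages)} messages)"


# Invented personas and spec, for illustration only.
personas = {
    "clinic_admin": "A clinic administrator who schedules 40 appointments a day.",
    "new_patient": "A first-time patient booking from a phone.",
}
results = review_spec_with_personas("Draft spec: online booking flow.", personas, fake_model)
for name, reply in results.items():
    print(f"{name}: {reply}")
```

Passing the model call in as a parameter keeps the clean-context loop separate from any particular provider, and makes it easy to check that every persona starts from the same two messages.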

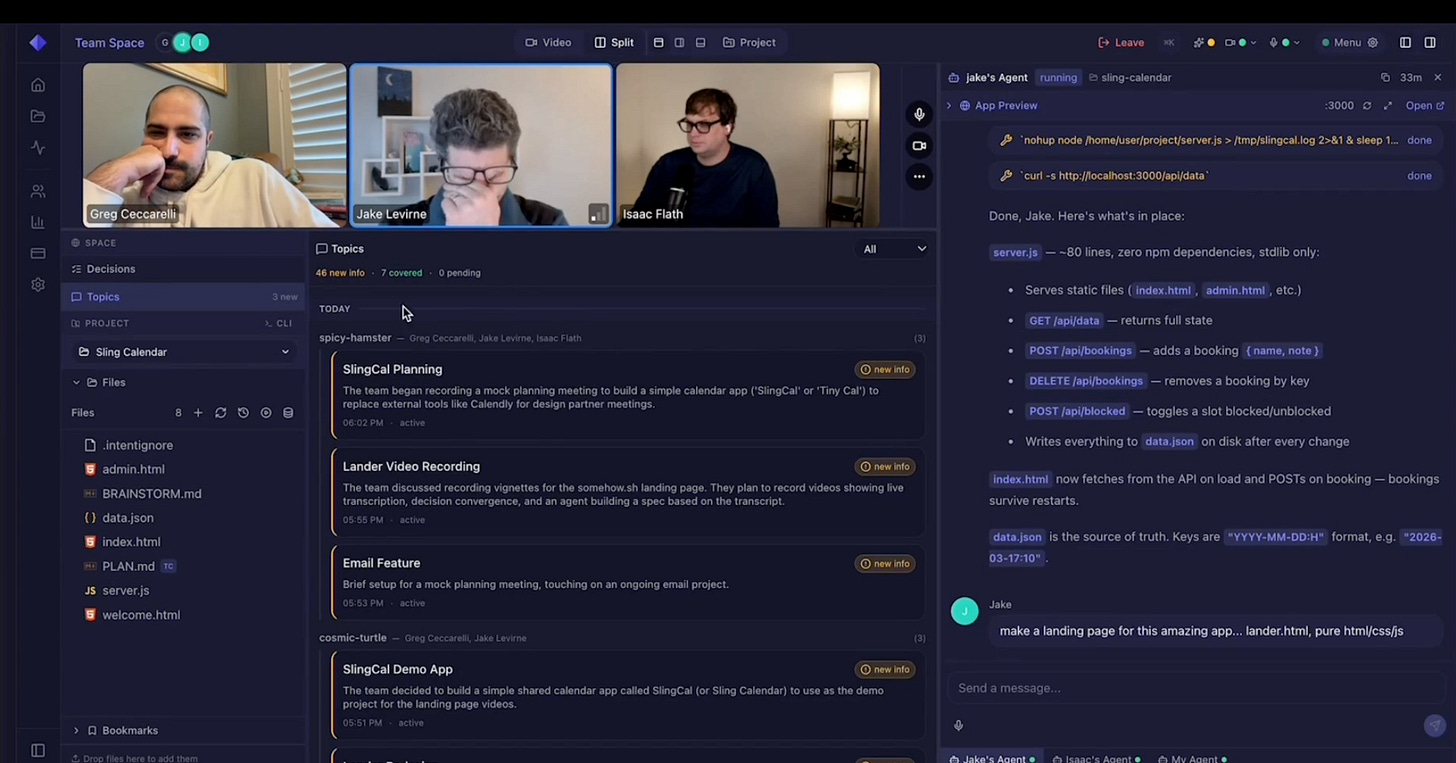

Real-Time Collaboration with Stoa

To address the logistical friction of gathering feedback and iterating on specifications, Jake and Greg introduced their new platform, Stoa. Designed for the agentic era, Stoa operates as a multiplayer collaborative environment that blends video conferencing, document editing, and shared AI coding agents into a single, durable workspace.

“So the crudest way to think about it is it’s like Google Meet meets Google Docs meets shared Claude Code agents meets instant everything’s versioned all jammed in one.”

In practice, this means a team can be on a call discussing a feature while an integrated agent transcribes the conversation, distills the decisions, and actively modifies the prototype or codebase in real time. Because the environment is durable, the context (including transcripts, files, and agent history) persists after the meeting ends, eliminating the context evaporation typical of disjointed modern tooling.

Key Takeaways

The session highlighted several profound shifts in how engineering teams must operate to maintain a competitive advantage:

Focus on Intent, Not Just Implementation: With implementation times collapsing, competitive advantage now lives in the “fuzzy front end.” Teams must optimize how fast they can capture decisions and validate ideas.

The Collapse of the Ticketing Pipeline: Agentic workflows bypass traditional sequencing and assignment phases. The goal is to move directly from a captured decision to a committed prototype.

Specifications over Code: As code becomes a derivative product of AI agents, human authority and effort must migrate upward to focus on intent, judgment, and the authorship of robust specifications.

Real-Time Validation is the New Standard: The ability to build and modify software live during customer or team calls drastically reduces feedback loop latencies, rendering asynchronous prototyping cycles obsolete.

Durability of Context is Critical: The tooling of the future must centralize and persist the context of decisions, transcriptions, and agent actions to maintain a shared mental model across the team.

The agentic era does not eliminate the need for human engineering; it merely shifts the human focus from the mechanics of typing code to the rigor of defining value. As Greg concluded, those who engineer their processes to navigate this new paradigm will fundamentally outpace their competitors.