How can I compare and identify the best AI models for my agentic tasks?

New AI models are released almost daily, each with improved capabilities and performance, so selecting the best one for your specific needs can be challenging. To evaluate and choose the right model, rely on a combination of independent benchmarks, crowdsourced testing, and personal validation.

Here is a structured approach to evaluating and selecting AI models:

1. Artificial Analysis

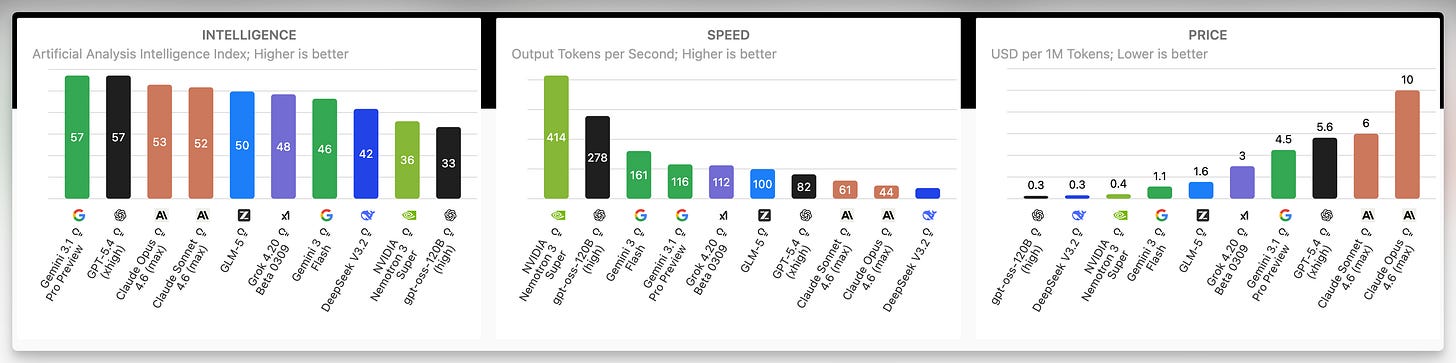

For a high-level overview of currently available models, Artificial Analysis is an excellent starting point. They conduct their own independent testing rather than relying on the metrics published by model providers, which lends their results significant credibility. This resource is particularly useful for evaluating the high-level trade-offs between:

Performance: How well the model scores on standard benchmarks and handles general tasks.

Cost: The price per task, token, or API call.

Speed: The latency and throughput of the model during inference.
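One way to reason about these three axes together is to discard any model that another model beats on all of them at once. The sketch below is only an illustration of that idea: the `ModelStats` fields, the model names, and every number are made up for the example and are not taken from Artificial Analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelStats:
    name: str
    quality: float  # benchmark score, higher is better (hypothetical scale)
    cost: float     # USD per million tokens, lower is better
    speed: float    # output tokens per second, higher is better


def dominates(a: ModelStats, b: ModelStats) -> bool:
    """True if a is at least as good as b on every axis and strictly better on one."""
    at_least = a.quality >= b.quality and a.cost <= b.cost and a.speed >= b.speed
    strictly = a.quality > b.quality or a.cost < b.cost or a.speed > b.speed
    return at_least and strictly


def pareto_front(models: list[ModelStats]) -> list[ModelStats]:
    """Keep only the models that no other model dominates."""
    return [m for m in models if not any(dominates(o, m) for o in models)]


# Made-up numbers, purely for illustration.
candidates = [
    ModelStats("model-a", quality=70.0, cost=10.0, speed=50.0),
    ModelStats("model-b", quality=60.0, cost=2.0, speed=120.0),
    ModelStats("model-c", quality=55.0, cost=3.0, speed=100.0),  # worse than model-b everywhere
    ModelStats("model-d", quality=65.0, cost=1.0, speed=40.0),
]

front = pareto_front(candidates)
print([m.name for m in front])  # ['model-a', 'model-b', 'model-d']
```

The models left on the front each represent a different trade-off; which one you pick still depends on how much you value quality over cost or speed for your workload.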

2. Arena

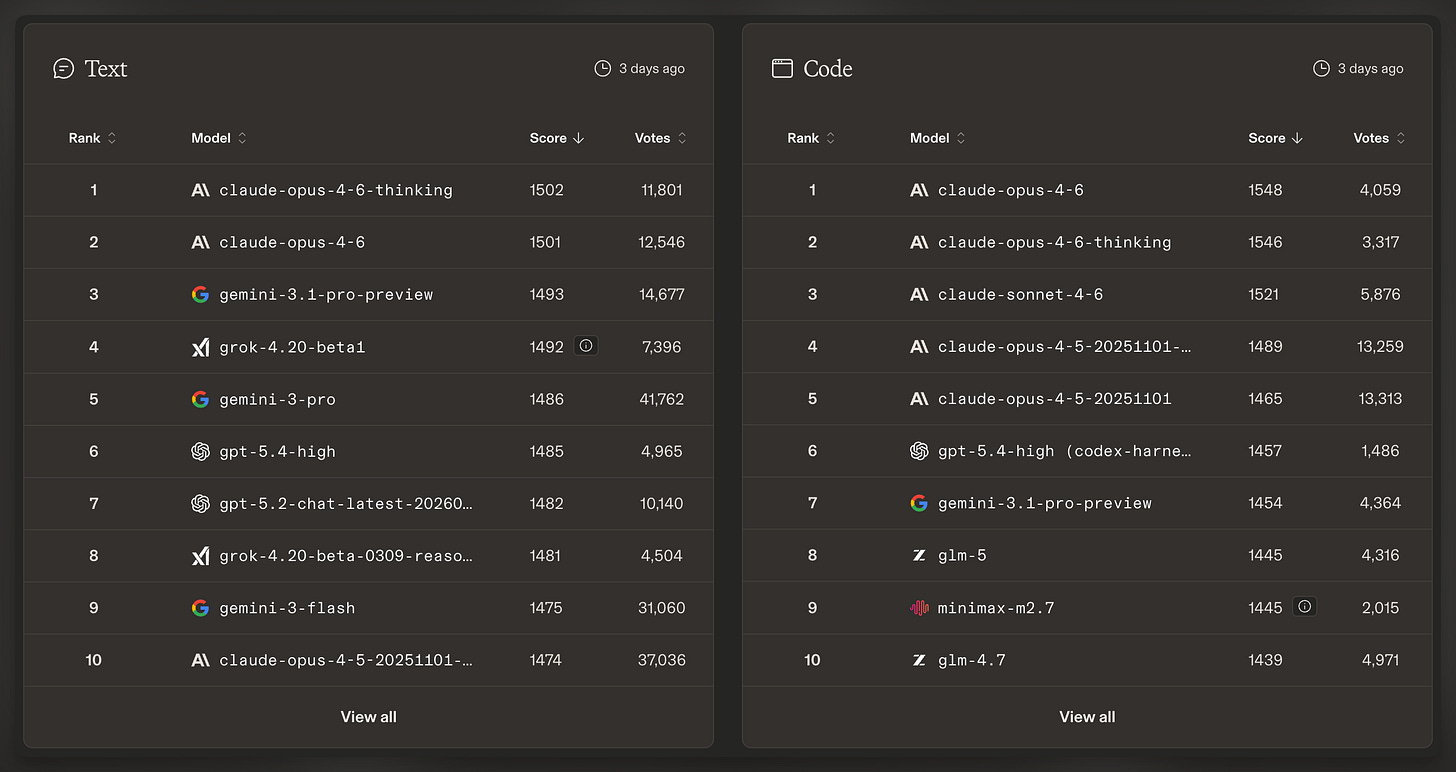

Formerly known as LM Arena, Arena takes a dynamic approach to model evaluation. Instead of using a fixed set of benchmarks, it hosts a competitive league where models are pitted against each other.

Crowdsourced Blind Battles: Users conduct blind tests by prompting different models with the exact same task and voting on the best response.

Real-World Coverage: Because users keep inventing new applications, this method captures a much wider array of use cases than static benchmarks, which is a crucial advantage for evaluating models.

Often, your own intuition about a model’s strengths and its optimal use cases will align closely with the crowdsourced consensus found on Arena.
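To see how individual blind votes can add up to a ranking, here is a minimal sketch using a plain Elo update. This is an assumption chosen for familiarity: the article does not describe Arena's actual rating method, and the model names, `k` factor, and starting rating are all hypothetical.

```python
from collections import defaultdict


def expected_score(r_a: float, r_b: float) -> float:
    """Probability that a player rated r_a beats one rated r_b under Elo."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400.0))


def rate(battles, k: float = 32.0, start: float = 1000.0) -> dict:
    """Fold a sequence of (winner, loser) votes into per-model ratings."""
    ratings = defaultdict(lambda: start)
    for winner, loser in battles:
        exp_win = expected_score(ratings[winner], ratings[loser])
        delta = k * (1.0 - exp_win)  # an upset win moves the ratings more
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


# Each tuple is one blind vote: (preferred model, other model). Made-up data.
votes = [
    ("model-a", "model-b"),
    ("model-a", "model-c"),
    ("model-b", "model-c"),
]

ratings = rate(votes)
ranking = sorted(ratings, key=ratings.get, reverse=True)
print(ranking)  # ['model-a', 'model-b', 'model-c']
```

With only three votes the numbers mean little; the point is that no fixed benchmark is involved, only pairwise human preferences.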

3. Personal Testing

Public testing platforms and data can only take you so far. Most importantly, to determine which model works best for your specific application, you must conduct your own testing. Approach this phase strategically:

Run Familiar Tasks: Maintain a baseline set of tasks that you have already processed with several older models. Because you know what to expect from these prompts, running them with a new model will give you a precise understanding of how its behavior compares to its predecessors.

Retry Past Attempts: Always revisit complex tasks that previous models struggled to complete. The stronger reasoning and improved performance of the latest generation of models often unlock solutions that were previously out of reach.
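Both habits can be captured in a small personal test suite: a fixed set of tasks with pass/fail checks that you run against an older and a newer model, then compare. The sketch below assumes each model is wrapped as a plain function from prompt to text; `old_model` and `new_model` are stubs standing in for real API calls, and the tasks are toy examples.

```python
from typing import Callable, Dict, List, NamedTuple


class Task(NamedTuple):
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the output is acceptable


Model = Callable[[str], str]


def run_suite(model: Model, tasks: List[Task]) -> Dict[str, bool]:
    """Run every task through the model and record pass/fail per task."""
    return {t.name: t.check(model(t.prompt)) for t in tasks}


def compare(old: Dict[str, bool], new: Dict[str, bool]) -> Dict[str, List[str]]:
    """List tasks the new model newly solves and tasks it newly fails."""
    return {
        "newly_solved": sorted(n for n in new if new[n] and not old.get(n, False)),
        "regressions": sorted(n for n in new if not new[n] and old.get(n, False)),
    }


# Stub models: replace these with calls to the real models you are comparing.
def old_model(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "I don't know"


def new_model(prompt: str) -> str:
    answers = {"What is 2 + 2?": "4", "Reverse the string 'abc'.": "cba"}
    return answers.get(prompt, "I don't know")


tasks = [
    Task("arithmetic", "What is 2 + 2?", lambda out: out.strip() == "4"),
    Task("reverse", "Reverse the string 'abc'.", lambda out: "cba" in out),
]

report = compare(run_suite(old_model, tasks), run_suite(new_model, tasks))
print(report)  # {'newly_solved': ['reverse'], 'regressions': []}
```

Keeping the task list stable over time is what makes the comparison meaningful; for real agentic tasks the `check` functions would typically run tests or inspect produced files rather than match strings.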

General Guidance

When researching a new model for your agentic tasks, start by checking Artificial Analysis for empirical trade-offs, then consult Arena for crowdsourced performance in real-world scenarios. Finally, and most importantly, conduct rigorous personal testing to validate the model against your specific requirements. Public data informs your intuition, but personal testing verifies the model’s capabilities in your production environment.
